5.1 Diffusion Profile and Skin Translucency

In Episode 3, I explained how a diffusion profile is used in subsurface scattering. The multipole model, with its profile reduced to a sum of Gaussian functions, is a good approximation. Since the BSSRDF is a general function that covers both reflected and transmitted light, we can also use it to calculate the translucency color of skin that results from subsurface scattering inside the skin tissue. The only difference is that when we calculate the reflectance caused by local subsurface scattering, we only consider light on the same side as the normal, whereas for translucency the light source is behind the surface. Therefore, to calculate the outgoing radiance, we only need to know the irradiance at the back and the distance the light travelled inside the skin. The biggest problem with translucency, however, is that it is hard to determine the distance travelled and the incident point on the back side, because the geometry of human skin is complex.
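To make the sum-of-Gaussians idea concrete, here is a minimal sketch that evaluates such a profile at a given travel distance. The six variance and weight values are an assumption: they are the figures commonly quoted for a six-Gaussian skin fit and are used here only for illustration, as are the names `SKIN_GAUSSIANS` and `transmittance_profile` and the unnormalized `exp(-d^2 / v)` form.

```python
import math

# ASSUMPTION: illustrative six-Gaussian skin fit, given as
# (variance, (R, G, B) weights). The weights sum to 1 per channel.
SKIN_GAUSSIANS = [
    (0.0064, (0.233, 0.455, 0.649)),
    (0.0484, (0.100, 0.336, 0.344)),
    (0.187,  (0.118, 0.198, 0.000)),
    (0.567,  (0.113, 0.007, 0.007)),
    (1.99,   (0.358, 0.004, 0.000)),
    (7.41,   (0.078, 0.000, 0.000)),
]


def transmittance_profile(distance):
    """Return the (R, G, B) attenuation after travelling `distance` in skin.

    `distance` must use units consistent with the variances
    (assumed here to be millimetres and mm^2 respectively).
    """
    rgb = [0.0, 0.0, 0.0]
    for variance, weights in SKIN_GAUSSIANS:
        # Unnormalized Gaussian falloff for this term (assumed form).
        falloff = math.exp(-(distance * distance) / variance)
        for channel in range(3):
            rgb[channel] += weights[channel] * falloff
    return tuple(rgb)
```

With these assumed values, red is attenuated far less than green and blue as the distance grows, which matches the reddish glow seen in backlit ears.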

5.2 Approximation Method

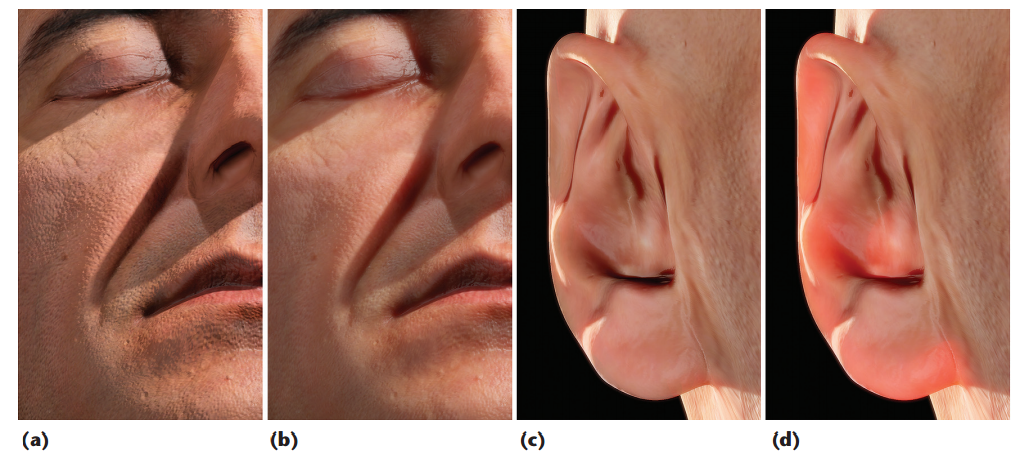

In their 2010 paper on real-time skin translucency, Jimenez et al. propose an approximation method. Translucency is most noticeable in thin parts such as the ears, where the front and back surfaces are close together, so we can use the inverted normal at the shading point to approximate the normal at the incident point on the back side.

For direct lighting, the irradiance is simply the dot product of the inverted normal and the light direction, clamped to zero. For image-based lighting, we can use the inverted normal to sample the irradiance map.
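The direct-lighting case can be sketched as follows. The function name `back_irradiance` and the `light_intensity` parameter are hypothetical, and both vectors are assumed to be unit length, with `to_light` pointing from the surface toward the light.

```python
def back_irradiance(normal, to_light, light_intensity=1.0):
    """Approximate irradiance at the back-side incident point.

    Uses the inverted shading normal as a stand-in for the back-side
    normal. Both vectors are assumed to be normalized 3-tuples.
    """
    inverted_normal = tuple(-c for c in normal)
    cos_theta = sum(a * b for a, b in zip(inverted_normal, to_light))
    # Light on the front side of the shading point contributes nothing here.
    return light_intensity * max(0.0, cos_theta)
```

For image-based lighting, the same `inverted_normal` would be used as the lookup direction into the irradiance map instead of being dotted with a light direction.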

For the distance travelled by light inside the skin, Jimenez et al. also use an approximation: they ignore refraction and take the length of the part of the straight line from the light source to the shading point that lies inside the skin. This requires a depth map rendered from the light’s perspective.
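A minimal sketch of that distance estimate, assuming the depth map is a 2D list of linear depths from the light and that the shading point has already been projected into the same light space. The names `path_length_in_skin`, `texel`, and `units_per_depth` are hypothetical.

```python
def path_length_in_skin(depth_map, texel, shading_depth, units_per_depth=1.0):
    """Estimate how far light travelled inside the skin, ignoring refraction.

    depth_map       -- 2D list of linear depths seen from the light (assumed layout)
    texel           -- (row, col) where the shading point projects into the map
    shading_depth   -- linear depth of the shading point from the light
    units_per_depth -- assumed factor converting depth units to real length
    """
    row, col = texel
    entry_depth = depth_map[row][col]  # where the light first hits the skin
    return units_per_depth * abs(shading_depth - entry_depth)
```

The absolute value guards against small negative differences caused by depth-map precision; a real shader would typically also bias the lookup to reduce such artifacts.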

5.3 Transmittance Map

We can approximate even further: instead of rendering depth maps, we can use an average thickness. One idea is to sample the distance through the skin by ray tracing over all incident (or departing) angles from -90° to 90° and to store the result in a look-up texture. Implementing the ray tracing by hand may not be easy. Luckily, many software packages can bake such a map from an input mesh. One of them is Knald, which calls this map a transmittance map.

However, after fetching the value from the texture, we may need to invert it, scale it, or add an offset to convert it to a real length.
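The remapping could look like the sketch below. It assumes the baked map stores values in [0, 1] with brighter texels meaning thinner geometry, which is why inversion is on by default; `scale` and `offset` are hypothetical tuning parameters to be chosen per asset.

```python
def thickness_from_texel(texel_value, scale=1.0, offset=0.0, invert=True):
    """Convert a transmittance-map texel into an approximate real thickness.

    ASSUMPTION: texel_value is in [0, 1] and brighter means thinner,
    so the value is inverted before scaling.
    """
    t = min(1.0, max(0.0, texel_value))  # clamp against out-of-range samples
    if invert:
        t = 1.0 - t
    return scale * t + offset
```

For example, with `scale=4.0` and `offset=0.5`, a texel of 0.25 maps to a thickness of 3.5 in whatever length unit the scale was chosen for.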

Combining the aforementioned factors, the final transmittance color is

.

.
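As a hedged sketch of how these factors might combine (the notation is assumed here for illustration): let $t$ be the value fetched from the transmittance map, $a$ and $b$ the scale and offset, $\mathbf{n}$ the shading normal, $\mathbf{l}$ the unit direction toward the light, $c_{\text{light}}$ the light color, and $w_i$, $v_i$ the weights and variances of the Gaussian terms in the diffusion profile. One plausible form is

$$
d = a\,(1 - t) + b
$$

$$
C_{\text{trans}} = c_{\text{light}} \, \max\bigl(0,\; -\mathbf{n} \cdot \mathbf{l}\bigr) \sum_{i} w_i \, e^{-d^{2} / v_i}
$$

The first line converts the texture value to a length, and the second multiplies the back-side irradiance by the profile evaluated at that length. Whether the Gaussian terms are normalized, and whether the surface albedo is also multiplied in, depends on the implementation.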

This value must be added to the reflectance value, since the two contributions come from light arriving on different sides of the surface. It is worth noting that because we don’t know the transmissivity (the ratio of transmitted light) of skin, we can determine a scaling coefficient empirically from the rendered result.
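The final composition is then a simple weighted sum. In this sketch, `strength` stands for the empirically tuned scaling coefficient, and the function name is hypothetical.

```python
def add_translucency(reflected_rgb, transmitted_rgb, strength=1.0):
    """Add the scaled transmitted color on top of the reflected color.

    strength -- empirical coefficient tuned by eye (assumed parameter)
    """
    return tuple(r + strength * t
                 for r, t in zip(reflected_rgb, transmitted_rgb))
```

Starting with `strength=1.0` and lowering it until backlit ears and nostrils stop looking overexposed is one reasonable way to tune it.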

References

Jimenez, J., Whelan, D., Sundstedt, V., & Gutierrez, D. (2010). Real-time realistic skin translucency. IEEE Computer Graphics and Applications, 30(4), 32-41.

List of Figures

Figure 14: (a) without / (b) with local subsurface scattering (c) without / (d) with translucency. Source: screen capture of online pdf of Real-time realistic skin translucency by Jimenez, J. on http://iryoku.com/translucency/downloads/Real-Time-Realistic-Skin-Translucency.pdf.

Figure 15: Transmittance map. Source: screen capture of a transmittance map generated by Knald.